Broadcom Storage and PCI Express

PCI has traditionally been an important part of the storage ecosystem, well before the deployment of the current serial PCI Express (PCIe) standard. The PCI bus and its follow-on standards served as the backbone of the computer industry, especially in storage systems, so it was no surprise when that market rapidly adopted PCIe: its software model allowed existing applications to be used seamlessly, while it offered scalability and a significant performance improvement with a smaller board footprint.

Today, PCIe is used throughout mainstream storage products to:

- Aggregate storage devices

- Connect the communication subsystems to the storage subsystems

- Offer mid-plane or backplane pathways for storage appliances and storage servers

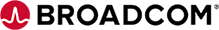

- Enable failover, redundancy, and mirroring of storage systems

There is a revolution going on in the storage market today, and given its strong penetration into current storage platforms, PCIe is well positioned to expand its role. As processors increased in performance and capability, storage subsystems became a bottleneck because of the latency of spinning-disk (HDD) media. When non-volatile flash memory offered the promise of persistent memory with much higher performance and lower latency, the Solid State Drive (SSD) storage category was born. Although rapidly growing PCIe-based flash designs are the industry's new favorite, the lion's share of today's data center storage remains on HDDs, where cost plays a major role. SSD costs are falling rapidly, narrowing the cost-per-GB gap between SSDs and HDDs, but most observers believe the two technologies will continue to coexist for some time.

The initial SSDs were meant to be integrated into the existing ecosystem, so their controllers offered standard storage interfaces such as Fibre Channel, SATA, and SAS. Those interfaces were designed around HDD characteristics (lower bandwidth and higher latency) and were an imperfect fit for SSDs. This was especially true in the enterprise market, where end users are willing to pay a premium for higher performance.

Because PCIe was the most common interface on enterprise processors and storage subsystems, SSD controllers began to offer PCIe as the interface to the SSD devices. This reduced latency and improved system performance, since there was no need to bridge back and forth through intermediate technologies, and it offered higher bandwidth by taking advantage of PCIe signaling rates.
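To make the bandwidth point concrete, the following is a minimal Python sketch that estimates one-direction link bandwidth from the PCIe signaling rate, line encoding, and lane count. The per-lane rates (2.5, 5, 8, and 16 GT/s) and encodings (8b/10b for Gen1/Gen2, 128b/130b for Gen3/Gen4) come from the PCIe base specifications; the function name `link_bandwidth_gbytes` and the choice of an x4 link (a width often used by PCIe SSDs) are illustrative assumptions. The model ignores packet-level overhead, so real throughput is somewhat lower.

```python
from fractions import Fraction

# Per-lane signaling rate (GT/s) and line-encoding efficiency per PCIe generation.
GENERATIONS = {
    1: (Fraction(25, 10), Fraction(8, 10)),    # 2.5 GT/s, 8b/10b
    2: (Fraction(5), Fraction(8, 10)),         # 5.0 GT/s, 8b/10b
    3: (Fraction(8), Fraction(128, 130)),      # 8.0 GT/s, 128b/130b
    4: (Fraction(16), Fraction(128, 130)),     # 16.0 GT/s, 128b/130b
}

# SATA 3.x for comparison: 6 Gb/s with 8b/10b encoding.
SATA3_GBYTES = float(Fraction(6) * Fraction(8, 10) / 8)


def link_bandwidth_gbytes(gen: int, lanes: int) -> float:
    """Approximate one-direction bandwidth in GB/s after line encoding.

    Hypothetical helper: ignores TLP/DLLP packet overhead.
    """
    rate, efficiency = GENERATIONS[gen]
    gbits_per_second = rate * efficiency * lanes  # usable Gbit/s
    return float(gbits_per_second / 8)            # bits -> bytes


if __name__ == "__main__":
    print(f"SATA 6 Gb/s: {SATA3_GBYTES:.2f} GB/s")
    for gen in GENERATIONS:
        print(f"PCIe Gen{gen} x4: {link_bandwidth_gbytes(gen, 4):.2f} GB/s")
```

Even a Gen3 x4 link offers several times the usable bandwidth of a SATA 6 Gb/s interface, and adding lanes or moving to a newer generation scales it further without changing the software model.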

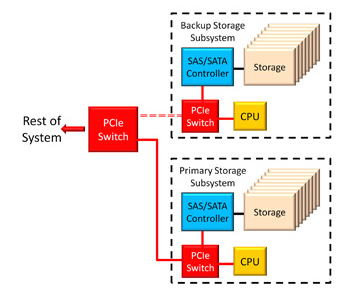

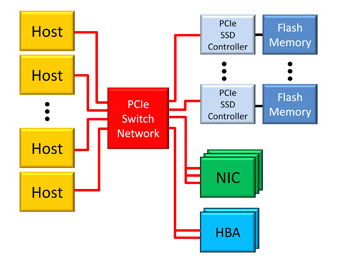

Because individual controllers could not provide the flash capacity needed for real high-performance systems, PCIe switches were used to aggregate multiple flash memory subsystems. This approach is now widespread in enterprise-class SSD deployments and is expected to grow over the next several years as SSDs penetrate more deeply into the market. Although flash devices and controllers will keep improving, the demand for more storage has always been insatiable, so aggregation will remain necessary and will ultimately grow.

Storage Standards for PCIe

The expectation of a rapidly growing PCIe-based SSD market is clearly reflected in the effort standards bodies are putting into replacing existing storage interfaces with PCIe. This includes protocols such as NVM Express (NVMe), SCSI over PCIe (SOP), and SATA Express, as well as SSD form-factor and connector specifications such as SFF-8639. Most of this work comes from the storage community itself, reflecting a desire to be ready for the solid-state revolution.

The PCI-SIG, the group responsible for the PCIe standard, is also extending the specification to enable more robust storage systems with new features such as Downstream Port Containment (DPC) and enhanced DPC (eDPC), which allow host systems to handle "surprise-down" events and other serious error conditions on storage devices connected to PCIe switches or bridges more gracefully.

Hybrid Storage Systems

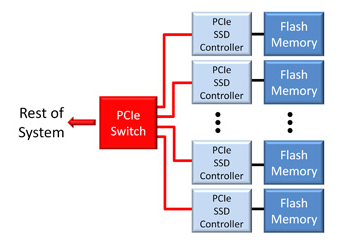

Although SSDs offer higher performance than spinning media, they are more expensive and are expected to remain so for the foreseeable future. However, a system built from a large amount of HDD storage and a relatively small amount of SSD memory can achieve performance similar to an all-SSD system at a much lower cost. Many storage system vendors now offer these hybrid systems, and they, too, will likely proliferate. PCIe switches play a key role in these designs.
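One simple way to see why a small SSD tier can bring a hybrid system close to all-SSD performance is a weighted-average latency model: if a fraction of accesses is served from SSD and the rest fall through to HDD, the average latency is the hit ratio times the SSD latency plus the remainder times the HDD latency. The Python sketch below assumes that simplified model (it ignores queuing and any SSD lookup cost on a miss), and the 100 µs SSD and 5,000 µs HDD latencies are hypothetical round numbers chosen only for illustration, not measured values.

```python
def effective_latency_us(ssd_hit_ratio: float,
                         ssd_latency_us: float,
                         hdd_latency_us: float) -> float:
    """Average access latency for a two-tier (SSD + HDD) system.

    Simplified model: a fraction `ssd_hit_ratio` of requests is served
    by SSD, and the remainder is served by HDD.
    """
    if not 0.0 <= ssd_hit_ratio <= 1.0:
        raise ValueError("ssd_hit_ratio must be between 0 and 1")
    return ssd_hit_ratio * ssd_latency_us + (1.0 - ssd_hit_ratio) * hdd_latency_us


if __name__ == "__main__":
    # Hypothetical latencies, for illustration only.
    SSD_US, HDD_US = 100.0, 5000.0
    for hit in (0.0, 0.90, 0.99, 1.0):
        print(f"SSD hit ratio {hit:.2f}: {effective_latency_us(hit, SSD_US, HDD_US):.0f} us")
```

Under these assumptions, the hit ratio dominates the result: at 90% SSD hits the average is still several times the SSD latency, while at 99% it comes much closer. This is why hybrid designs depend on placing frequently accessed data on the SSD tier.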

First, the storage appliance or server is likely to be interconnected by a PCIe-based switch network, especially in the enterprise space. It is therefore efficient to add more ports to the existing backbone and let the HDD and SSD subsystems communicate with the processors, and with each other, incrementally.

The second opportunity arises because SSDs have quickly moved to PCIe-based interfaces, and initiatives are under way to offer a similar interface for HDDs. Although HDDs do not benefit from a direct PCIe connection as much as SSDs do, this approach does offer a convenient, consolidated interface to the storage system. Even HDD-based storage benefits from lower latency and higher bandwidth, especially as HDDs improve their performance to keep up with SSD competition. Once intermediate interfaces such as SAS and SATA have migrated to PCI Express, hybrid HDD/SSD systems become easier to build, with PCIe switches distributed throughout the network aggregating, consolidating, and routing storage data regardless of the underlying technology.

This is a unique opportunity for the industry.

Taking PCIe Out of the Box

So far, we have discussed largely bounded systems, with most of the interaction happening inside the box. Once the basic data path is built on PCIe, as is already happening rapidly, it becomes straightforward to take the data outside the box while remaining within the PCIe protocol. The great value of extending the connection outside the box without intermediate protocol bridging is that the software model becomes much simpler than the status quo, and it eliminates the latency, cost, and complexity of translating to, for example, Ethernet merely to send data to another box.

PLX has already demonstrated box-to-box PCIe connections over both copper and optical cabling, using low-cost, industry-standard approaches such as QSFP+ and mini-SAS HD.

Building Fabric-Based Storage Systems

The use of PCIe in storage, at every level, offers enough benefit and has enough momentum that its adoption appears inevitable, and most industry observers have predicted a rapid increase in its use. But there is an equally exciting opportunity for PCIe-based storage as data centers embrace innovative architectures.

PLX has described the advantages and possibilities of using PCIe as the converged fabric at the rack level through its ExpressFabric approach, and once such fabrics become more common, the opportunity for storage is even more compelling. If your fabric is based on PCIe, adding PCIe-based SSD (or HDD) storage becomes as easy as plugging in a blade with arrays of storage, each providing a direct connection to the underlying memory technology with the lowest possible latency and complexity.

Summary

PCIe was originally envisioned as a high-speed mechanism for connecting components to each other with high performance and low latency. Since then it has been enhanced, scaled, and accelerated to deliver that value across many markets, and storage has been one of the main beneficiaries. Its role in enterprise storage systems continues to expand with new and exciting designs.